Difference between revisions of "Aufgaben:Exercise 1.14: Bhattacharyya Bound for BEC"

| Line 77: | Line 77: | ||

- It holds ${\rm Pr}[\underline{x}_{0} → \underline{x}_{i}] \ = \ \lambda ^{d_{{\rm H},\hspace{0.05cm}i}} \ · \ (1 – \lambda)^{n \hspace{0.05cm}– \hspace{0.05cm}d_{{\rm H},\hspace{0.05cm}i}}$. | - It holds ${\rm Pr}[\underline{x}_{0} → \underline{x}_{i}] \ = \ \lambda ^{d_{{\rm H},\hspace{0.05cm}i}} \ · \ (1 – \lambda)^{n \hspace{0.05cm}– \hspace{0.05cm}d_{{\rm H},\hspace{0.05cm}i}}$. | ||

+ It holds ${\rm Pr}[\underline{x}_{0} → \underline{x}_{i}] \ = \ 1/2 · \lambda ^{d_{{\rm H},\hspace{0.05cm}i}}.$ | + It holds ${\rm Pr}[\underline{x}_{0} → \underline{x}_{i}] \ = \ 1/2 · \lambda ^{d_{{\rm H},\hspace{0.05cm}i}}.$ | ||

| − | - ${\rm Pr}[\underline{x}_{0} → \underline{x}_{i}]$ is the falsification probability from $x_{0}$ to $x_{i}$. | + | - ${\rm Pr}[\underline{x}_{0} → \underline{x}_{i}]$ is the falsification probability from $x_{0}$ to $x_{i}$. |

{What are the following probabilities? | {What are the following probabilities? | ||

| Line 96: | Line 96: | ||

===Solution=== | ===Solution=== | ||

{{ML-Kopf}} | {{ML-Kopf}} | ||

| − | '''(1)''' The code words $\underline{x}_{0}$ and $\underline{x}_{1}$ differ in bit $2, \ 4$ and $5$. If only one of these three binary values is transmitted correctly, the entire | + | '''(1)''' The code words $\underline{x}_{0}$ and $\underline{x}_{1}$ differ in bit $2, \ 4$ and $5$. If only one of these three binary values is transmitted correctly, the entire code word is thus uniquely determined. No information about the code word is obtained for the following received vectors (see table on the information page): |

| − | * $\underline{y} = (0, {\rm E}, 0, {\rm E}, {\rm E})$ | + | * $\underline{y} = (0, {\rm E}, 0, {\rm E}, {\rm E})$ with probability $\lambda^3 \ · \ (1 – \lambda)^2$, |

| − | * $\underline{y} = (0, {\rm E}, {\rm E}, {\rm E}, {\rm E})$ with probability $\lambda^4 \ · \ (1 – \lambda)$, | + | * $\underline{y} = (0, {\rm E}, {\rm E}, {\rm E}, {\rm E})$ with probability $\lambda^4 \ · \ (1 – \lambda)$, |

| − | * $\underline{y} = ({\rm E}, {\rm E}, 0, {\rm E}, {\rm E})$ with probability $\lambda^4 \ · \ (1 – \lambda)$, | + | * $\underline{y} = ({\rm E}, {\rm E}, 0, {\rm E}, {\rm E})$ with probability $\lambda^4 \ · \ (1 – \lambda)$, |

| − | * $\underline{y} = ({\rm E}, {\rm E}, {\rm E}, {\rm E}, {\rm E})$ with probability $\lambda^5$. | + | * $\underline{y} = ({\rm E}, {\rm E}, {\rm E}, {\rm E}, {\rm E})$ with probability $\lambda^5$. |

| − | The probability that | + | The probability that due to the specific received vector $\underline{y}$, the code word $\underline{x}_{1}$ is just as likely as $\underline{x}_{0}$, is given by |

:$$\ {\rm Pr}\ [\underline{x}_0 \hspace{0.12cm}{\rm and}\hspace{0.12cm} \underline{x}_1 \hspace{0.15cm}{\rm are \hspace{0.15cm}equally \hspace{0.15cm}probable}] = \lambda^3 \cdot (1- \lambda)^2 + 2 \cdot \lambda^4 \cdot (1- \lambda) + \lambda^5 =\lambda^3 \cdot \left [ (1- \lambda)^2 + 2 \cdot \lambda \cdot (1- \lambda) + \lambda^2 \right ] = \lambda^3 \hspace{0.05cm}.$$ | :$$\ {\rm Pr}\ [\underline{x}_0 \hspace{0.12cm}{\rm and}\hspace{0.12cm} \underline{x}_1 \hspace{0.15cm}{\rm are \hspace{0.15cm}equally \hspace{0.15cm}probable}] = \lambda^3 \cdot (1- \lambda)^2 + 2 \cdot \lambda^4 \cdot (1- \lambda) + \lambda^5 =\lambda^3 \cdot \left [ (1- \lambda)^2 + 2 \cdot \lambda \cdot (1- \lambda) + \lambda^2 \right ] = \lambda^3 \hspace{0.05cm}.$$ | ||

| − | In this case, one decides at random for $\underline{x}_{0}$ (would be correct) or for $\underline{x}_{1}$ (unfortunately wrong), with equal probability. From this follows: | + | In this case, one decides at random for $\underline{x}_{0}$ (would be correct) or for $\underline{x}_{1}$ (unfortunately wrong), with equal probability. From this follows: |

:$${\rm Pr} [\underline{x}_{\hspace{0.02cm}0} \mapsto \underline{x}_{\hspace{0.02cm}1}] = 1/2 \cdot \lambda^3 \hspace{0.15cm} \underline{= 0.5 \cdot 10^{-3}} \hspace{0.05cm}.$$ | :$${\rm Pr} [\underline{x}_{\hspace{0.02cm}0} \mapsto \underline{x}_{\hspace{0.02cm}1}] = 1/2 \cdot \lambda^3 \hspace{0.15cm} \underline{= 0.5 \cdot 10^{-3}} \hspace{0.05cm}.$$ | ||

| Line 113: | Line 113: | ||

| − | '''(2)''' According to subtask '''(1)''', <u>answer 2</u> is correct | + | '''(2)''' According to subtask '''(1)''', <u>only answer 2</u> is correct: |

| − | *${\rm Pr}[\underline{x}_{0} → \underline{x}_{1}]$ does not state that with this probability the | + | *${\rm Pr}[\underline{x}_{0} → \underline{x}_{1}]$ does not state that with this probability the code word $\underline{x}_{0}$ actually transitions to the incorrect code word $\underline{x}_{1}$, but only that it could transition to $\underline{x}_{1}$ with this probability. |

| − | * ${\rm Pr}[\underline{x}_{0} → \underline{x}_{1}]$ also includes constellations where the decision is actually for $\underline{x}_{2}$ or $\underline{x}_{3}$. | + | |

| + | * ${\rm Pr}[\underline{x}_{0} → \underline{x}_{1}]$ also includes constellations where the decision is actually for $\underline{x}_{2}$ or $\underline{x}_{3}$. | ||

| Line 122: | Line 123: | ||

'''(3)''' Because of $d_{\rm H}(\underline{x}_{0}, \underline{x}_{2}) = 3$ and $d_{\rm H}(\underline{x}_{0}, \underline{x}_{3}) = 4$ it follows that | '''(3)''' Because of $d_{\rm H}(\underline{x}_{0}, \underline{x}_{2}) = 3$ and $d_{\rm H}(\underline{x}_{0}, \underline{x}_{3}) = 4$ it follows that | ||

| − | :$${\rm Pr} [\underline{x}_{\hspace{0.02cm}0} \mapsto \underline{x}_{\hspace{0.02cm}2}] = 1/2 \cdot \lambda^3 \hspace{0.15cm} \underline{= 0.5 \cdot 10^{-3}} \hspace{0.05cm}, | + | :$${\rm Pr} [\underline{x}_{\hspace{0.02cm}0} \mapsto \underline{x}_{\hspace{0.02cm}2}] = 1/2 \cdot \lambda^3 \hspace{0.15cm} \underline{= 0.5 \cdot 10^{-3}} \hspace{0.05cm},$$ |

| + | :$$ {\rm Pr} [\underline{x}_{\hspace{0.02cm}0} \mapsto \underline{x}_{\hspace{0.02cm}3}] = 1/2 \cdot \lambda^4 \hspace{0.15cm} \underline{= 0.05 \cdot 10^{-3}} \hspace{0.05cm}.$$ | ||

| − | '''(4)''' The block error probability is never larger (with a certain probability rather smaller) than the so-called | + | '''(4)''' The block error probability is never larger (with a certain probability rather smaller) than the so-called "Union Bound": |

:$${\rm Pr(Union \hspace{0.15cm}Bound)} \hspace{-0.15cm}\ = \ \hspace{-0.15cm} {\rm Pr}[\underline{x}_{\hspace{0.02cm}0} \hspace{-0.02cm}\mapsto \hspace{-0.02cm}\underline{x}_{\hspace{0.02cm}1}] +{\rm Pr}[\underline{x}_{\hspace{0.02cm}0} \hspace{-0.02cm}\mapsto \hspace{-0.02cm} \underline{x}_{\hspace{0.02cm}2}] +{\rm Pr}[\underline{x}_{\hspace{0.02cm}0} \hspace{-0.02cm}\mapsto \hspace{-0.02cm} \underline{x}_{\hspace{0.02cm}3}] = 2 \cdot \lambda^3/2 + \lambda^4/2 = 0.001 + 0.00005 \hspace{0.15cm} \underline{= 1.05 \cdot 10^{-3}} \hspace{0.05cm}.$$ | :$${\rm Pr(Union \hspace{0.15cm}Bound)} \hspace{-0.15cm}\ = \ \hspace{-0.15cm} {\rm Pr}[\underline{x}_{\hspace{0.02cm}0} \hspace{-0.02cm}\mapsto \hspace{-0.02cm}\underline{x}_{\hspace{0.02cm}1}] +{\rm Pr}[\underline{x}_{\hspace{0.02cm}0} \hspace{-0.02cm}\mapsto \hspace{-0.02cm} \underline{x}_{\hspace{0.02cm}2}] +{\rm Pr}[\underline{x}_{\hspace{0.02cm}0} \hspace{-0.02cm}\mapsto \hspace{-0.02cm} \underline{x}_{\hspace{0.02cm}3}] = 2 \cdot \lambda^3/2 + \lambda^4/2 = 0.001 + 0.00005 \hspace{0.15cm} \underline{= 1.05 \cdot 10^{-3}} \hspace{0.05cm}.$$ | ||

| Line 135: | Line 137: | ||

:$${\rm Pr(block\:error) ≤ {\rm Pr(Union \hspace{0.15cm}Bound)} \le Pr(Bhattacharyya)} = W(\beta) - 1.$$ | :$${\rm Pr(block\:error) ≤ {\rm Pr(Union \hspace{0.15cm}Bound)} \le Pr(Bhattacharyya)} = W(\beta) - 1.$$ | ||

| − | *For the distance spectrum or the weight function, we obtain in the present case: | + | *For the distance spectrum or the enumerator weight function, we obtain in the present case: |

:$$W_0 = 1 \hspace{0.05cm}, \hspace{0.2cm} W_3 = 2 \hspace{0.05cm}, \hspace{0.2cm}W_4 = 1 \hspace{0.3cm} \Rightarrow \hspace{0.3cm} W(X) = 1+ 2 \cdot X^{3} +X^{4} \hspace{0.05cm}.$$ | :$$W_0 = 1 \hspace{0.05cm}, \hspace{0.2cm} W_3 = 2 \hspace{0.05cm}, \hspace{0.2cm}W_4 = 1 \hspace{0.3cm} \Rightarrow \hspace{0.3cm} W(X) = 1+ 2 \cdot X^{3} +X^{4} \hspace{0.05cm}.$$ | ||

| − | *For the BEC channel, $\beta = \lambda$ also applies. From this follows as a final result for $\lambda = 0.001$: | + | *For the BEC channel, $\beta = \lambda$ also applies. From this follows as a final result for $\lambda = 0.001$: |

:$${\rm Pr(Bhattacharyya)} = 2 \cdot \lambda^3 + \lambda^4 \hspace{0.15cm} \underline{= 2.1 \cdot 10^{-3}} \hspace{0.05cm}.$$ | :$${\rm Pr(Bhattacharyya)} = 2 \cdot \lambda^3 + \lambda^4 \hspace{0.15cm} \underline{= 2.1 \cdot 10^{-3}} \hspace{0.05cm}.$$ | ||

| − | Note that | + | *Note that with the BEC model, the "Bhattacharyya Bound" is always twice as large as the "Union Bound", which is itself an upper bound on the block error probability. |

{{ML-Fuß}} | {{ML-Fuß}} | ||

Revision as of 16:49, 4 August 2022

In this exercise, we consider the systematic $(5, 2)$ code

- with the $2×5$ generator matrix

- $${ \boldsymbol{\rm G}}_{(5, 2)} = \begin{pmatrix} 1 &0 &1 &1 &0\\ 0 &1 &0 &1 &1 \end{pmatrix} \hspace{0.05cm},$$

- the $3 × 5$ parity-check matrix

- $${ \boldsymbol{\rm H}}_{(5, 2)} = \begin{pmatrix} 1 &0 &1 &0 &0\\ 1 &1 &0 &1 &0\\ 0 &1 &0 &0 &1 \end{pmatrix} \hspace{0.05cm},$$

- and the $2^k = 4$ code words

- $$\underline{x}_0 \hspace{-0.15cm}\ = \ \hspace{-0.15cm} (0, 0, 0, 0, 0) \hspace{0.05cm},\hspace{0.2cm}\underline{x}_1 = (0, 1, 0, 1, 1)\hspace{0.05cm},\hspace{0.2cm}\underline{x}_2 \hspace{-0.15cm}\ = \ \hspace{-0.15cm} (1, 0, 1, 1, 0) \hspace{0.05cm},\hspace{0.2cm}\underline{x}_3 = (1, 1, 1, 0, 1)\hspace{0.05cm}.$$

At the output of the digital channel defined by the "BEC model" ("Binary Erasure Channel") with the erasure probability $\lambda = 0.001$, the received vector

- $$\underline{y} = (y_1, \hspace{0.05cm}y_2, \hspace{0.05cm}y_3, \hspace{0.05cm}y_4, \hspace{0.05cm}y_5)$$

occurs, where for $i = 1, \ \text{...} \ , 5$ holds:

- $$y_{i} \in \{0, 1, \rm E\}.$$

The BEC channel is characterized by the fact that

- falsifications $(0 → 1,\ 1 → 0)$ are excluded,

- but erasures $(0 → \rm E,\ 1 → E)$ may occur.

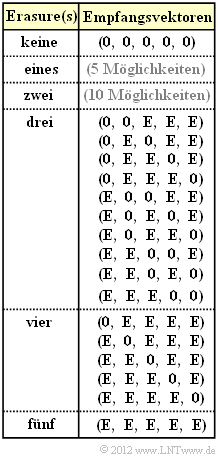

The graph explicitly shows all possible received vectors $\underline{y}$ with three or more erasures $\rm (E)$ assuming that the all-zero vector $(0, 0, 0, 0, 0)$ was sent.

- For less than three erausures, for the considered $(5, 2)$ code, the "code word finder" always returns the correct decision: $\underline{z} = \underline{x}$.

- On the other hand, if there are three or more erasures, wrong decisions may occur. In this case, the following applies to the block error probability:

- $$ {\rm Pr(block\:error)}= {\rm Pr} (\underline{z} \ne \underline{x}) = {\rm Pr}\left \{ \hspace{0.1cm} [\underline{x}_{\hspace{0.02cm}0} \mapsto \underline{x}_{\hspace{0.02cm}1}] \hspace{0.05cm}\cup\hspace{0.05cm}[\underline{x}_{\hspace{0.02cm}0} \mapsto \underline{x}_{\hspace{0.02cm}2}] \hspace{0.05cm}\cup \hspace{0.05cm}[\underline{x}_{\hspace{0.02cm}0} \mapsto \underline{x}_{\hspace{0.02cm}3}] \hspace{0.1cm}\right \} \hspace{0.05cm}.$$

Please note:

- The event $[\underline{x}_{0} → \underline{x}_{1}]$ does not necessarily say that at the received vector under consideration $\underline{y}$ is actually decided for the codeword $\underline{x}_{1}$, but only that the decision for $x_{1}$ would be more reasonable than the decision for $\underline{x}_{0}$ due to statistics.

- But it could also be decided for $\underline{x}_{2}$ or $\underline{x}_{3}$ if the "maximum-likelihood criterion" is in favor.

Computing the block error probability is difficult because the events $[\underline{x}_{0} → \underline{x}_{1}]$ , $[\underline{x}_{0} → \underline{x}_{2}]$ and $[\underline{x}_{0} → \underline{x}_{3}]$ are not necessarily "disjoint" . An upper bound is provided by the "Union Bound":

- $${\rm Pr(Union \hspace{0.15cm}Bound)} = {\rm Pr}[\underline{x}_{\hspace{0.02cm}0} \hspace{-0.02cm}\mapsto \hspace{-0.02cm}\underline{x}_{\hspace{0.02cm}1}] +{\rm Pr}[\underline{x}_{\hspace{0.02cm}0} \hspace{-0.02cm}\mapsto \hspace{-0.02cm} \underline{x}_{\hspace{0.02cm}2}] +{\rm Pr}[\underline{x}_{\hspace{0.02cm}0} \hspace{-0.02cm}\mapsto \hspace{-0.02cm} \underline{x}_{\hspace{0.02cm}3}] \ge {\rm Pr(block\hspace{0.15cm}error)} \hspace{0.05cm}.$$

Another bound was given by Bhattacharyya:

- $${\rm Pr(Bhattacharyya)} = W(\beta)-1 \ge {\rm Pr(Union \hspace{0.15cm}Bound)} \ge {\rm Pr(block\:error)} \hspace{0.05cm},$$

where for the binary erasure channel, the Bhattacharyya parameter $\beta = \lambda$ and the "weight enumerator function" $W(X)$ where the pseudo-variable $X$ is to be replaced here by the Bhattacharyya parameter $\lambda$.

- The "Bhattacharyya Bound" is more or less far above the "Union Bound" depending on the channel.

- Its importance lies in the fact that the bound can be specified in the same way for different channels.

Hints: The exercise belongs to the chapter "Bounds on block error probability".

Questions

Solution

- $\underline{y} = (0, {\rm E}, 0, {\rm E}, {\rm E})$ with probability $\lambda^3 \ · \ (1 – \lambda)^2$,

- $\underline{y} = (0, {\rm E}, {\rm E}, {\rm E}, {\rm E})$ with probability $\lambda^4 \ · \ (1 – \lambda)$,

- $\underline{y} = ({\rm E}, {\rm E}, 0, {\rm E}, {\rm E})$ with probability $\lambda^4 \ · \ (1 – \lambda)$,

- $\underline{y} = ({\rm E}, {\rm E}, {\rm E}, {\rm E}, {\rm E})$ with probability $\lambda^5$.

The probability that due to the specific received vector $\underline{y}$, the code word $\underline{x}_{1}$ is just as likely as $\underline{x}_{0}$, is given by

- $$\ {\rm Pr}\ [\underline{x}_0 \hspace{0.12cm}{\rm and}\hspace{0.12cm} \underline{x}_1 \hspace{0.15cm}{\rm are \hspace{0.15cm}equally \hspace{0.15cm}probable}] = \lambda^3 \cdot (1- \lambda)^2 + 2 \cdot \lambda^4 \cdot (1- \lambda) + \lambda^5 =\lambda^3 \cdot \left [ (1- \lambda)^2 + 2 \cdot \lambda \cdot (1- \lambda) + \lambda^2 \right ] = \lambda^3 \hspace{0.05cm}.$$

In this case, one decides at random for $\underline{x}_{0}$ (would be correct) or for $\underline{x}_{1}$ (unfortunately wrong), with equal probability. From this follows:

- $${\rm Pr} [\underline{x}_{\hspace{0.02cm}0} \mapsto \underline{x}_{\hspace{0.02cm}1}] = 1/2 \cdot \lambda^3 \hspace{0.15cm} \underline{= 0.5 \cdot 10^{-3}} \hspace{0.05cm}.$$

(2) According to subtask (1), only answer 2 is correct:

- ${\rm Pr}[\underline{x}_{0} → \underline{x}_{1}]$ does not state that with this probability the code word $\underline{x}_{0}$ actually transitions to the incorrect code word $\underline{x}_{1}$, but only that it could transition to $\underline{x}_{1}$ with this probability.

- ${\rm Pr}[\underline{x}_{0} → \underline{x}_{1}]$ also includes constellations where the decision is actually for $\underline{x}_{2}$ or $\underline{x}_{3}$.

(3) Because of $d_{\rm H}(\underline{x}_{0}, \underline{x}_{2}) = 3$ and $d_{\rm H}(\underline{x}_{0}, \underline{x}_{3}) = 4$ it follows that

- $${\rm Pr} [\underline{x}_{\hspace{0.02cm}0} \mapsto \underline{x}_{\hspace{0.02cm}2}] = 1/2 \cdot \lambda^3 \hspace{0.15cm} \underline{= 0.5 \cdot 10^{-3}} \hspace{0.05cm},$$

- $$ {\rm Pr} [\underline{x}_{\hspace{0.02cm}0} \mapsto \underline{x}_{\hspace{0.02cm}3}] = 1/2 \cdot \lambda^4 \hspace{0.15cm} \underline{= 0.05 \cdot 10^{-3}} \hspace{0.05cm}.$$

(4) The block error probability is never larger (with a certain probability rather smaller) than the so-called "Union Bound":

- $${\rm Pr(Union \hspace{0.15cm}Bound)} \hspace{-0.15cm}\ = \ \hspace{-0.15cm} {\rm Pr}[\underline{x}_{\hspace{0.02cm}0} \hspace{-0.02cm}\mapsto \hspace{-0.02cm}\underline{x}_{\hspace{0.02cm}1}] +{\rm Pr}[\underline{x}_{\hspace{0.02cm}0} \hspace{-0.02cm}\mapsto \hspace{-0.02cm} \underline{x}_{\hspace{0.02cm}2}] +{\rm Pr}[\underline{x}_{\hspace{0.02cm}0} \hspace{-0.02cm}\mapsto \hspace{-0.02cm} \underline{x}_{\hspace{0.02cm}3}] = 2 \cdot \lambda^3/2 + \lambda^4/2 = 0.001 + 0.00005 \hspace{0.15cm} \underline{= 1.05 \cdot 10^{-3}} \hspace{0.05cm}.$$

(5) Generally speaking:

- $${\rm Pr(block\:error) ≤ {\rm Pr(Union \hspace{0.15cm}Bound)} \le Pr(Bhattacharyya)} = W(\beta) - 1.$$

- For the distance spectrum or the enumerator weight function, we obtain in the present case:

- $$W_0 = 1 \hspace{0.05cm}, \hspace{0.2cm} W_3 = 2 \hspace{0.05cm}, \hspace{0.2cm}W_4 = 1 \hspace{0.3cm} \Rightarrow \hspace{0.3cm} W(X) = 1+ 2 \cdot X^{3} +X^{4} \hspace{0.05cm}.$$

- For the BEC channel, $\beta = \lambda$ also applies. From this follows as a final result for $\lambda = 0.001$:

- $${\rm Pr(Bhattacharyya)} = 2 \cdot \lambda^3 + \lambda^4 \hspace{0.15cm} \underline{= 2.1 \cdot 10^{-3}} \hspace{0.05cm}.$$

- Note that with the BEC model, the "Bhattacharyya Bound" is always twice as large as the "Union Bound", which is itself an upper bound on the block error probability.