Difference between revisions of "Aufgaben:Exercise 1.1: Entropy of the Weather"

m (Javier moved page Aufgabe 1.1: Wetterentropie to Exercise 1.1: Entropy of the Weather) |

|||

| Line 3: | Line 3: | ||

}} | }} | ||

| − | [[File:Inf_A_1_1_vers2.png|right|frame| | + | [[File:Inf_A_1_1_vers2.png|right|frame|Five different binary sources]] |

| − | + | A weather station queries different regions every day and receives a message $x$ back as a response in each case, namely | |

| − | * $x = \rm B$: | + | * $x = \rm B$: The weather is rather bad. |

| − | * $x = \rm G$: | + | * $x = \rm G$: The weather is rather good. |

| − | + | The data were stored in files over many years for different regions, so that the entropies of the $\rm B/G$–sequences can be determined: | |

:$$H = p_{\rm B} \cdot {\rm log}_2\hspace{0.1cm}\frac{1}{p_{\rm B}} + p_{\rm G} \cdot {\rm log}_2\hspace{0.1cm}\frac{1}{p_{\rm G}}$$ | :$$H = p_{\rm B} \cdot {\rm log}_2\hspace{0.1cm}\frac{1}{p_{\rm B}} + p_{\rm G} \cdot {\rm log}_2\hspace{0.1cm}\frac{1}{p_{\rm G}}$$ | ||

| − | + | with the <i>binary logarithm</i> | |

:$${\rm log}_2\hspace{0.1cm}p=\frac{{\rm lg}\hspace{0.1cm}p}{{\rm lg}\hspace{0.1cm}2}\hspace{0.3cm} \left ( = {\rm ld}\hspace{0.1cm}p \right ) \hspace{0.05cm}.$$ | :$${\rm log}_2\hspace{0.1cm}p=\frac{{\rm lg}\hspace{0.1cm}p}{{\rm lg}\hspace{0.1cm}2}\hspace{0.3cm} \left ( = {\rm ld}\hspace{0.1cm}p \right ) \hspace{0.05cm}.$$ | ||

| − | „lg” | + | Here, „lg” denotes the logarithm to the base $10$. It should also be mentioned that the pseudo-unit $\text{bit/query}$ must be added in each case. |

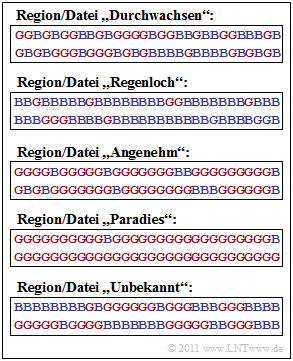

| − | + | The graph shows these binary sequences for $60$ days and the following regions: | |

| − | * Region „ | + | * Region „mixed”: $p_{\rm B} = p_{\rm G} =0.5$, |

| − | * Region „ | + | * Region „rainy”: $p_{\rm B} = 0.8, \; p_{\rm G} =0.2$, |

| − | * Region „ | + | * Region „pleasant”: $p_{\rm B} = 0.2, \; p_{\rm G} =0.8$, |

| − | * Region „ | + | * Region „paradise”: $p_{\rm B} = 1/30, \; p_{\rm G} =29/30$. |

| − | + | Finally, the file „Unknown” is also given, whose statistical properties are to be estimated. | |

| Line 34: | Line 34: | ||

| − | '' | + | ''Hints:'' |

| − | * | + | *This task belongs to the chapter [[Information_Theory/Gedächtnislose_Nachrichtenquellen|Discrete Memoryless Sources]]. |

| − | * | + | *For the first four files it is assumed that the events $\rm B$ and $\rm G$ are statistically independent, a rather unrealistic assumption for weather practice. |

| − | * | + | *The task was designed at a time when [https://en.wikipedia.org/wiki/Greta_Thunberg Greta] was just starting school. We leave it to you to rename „paradise” to „hell”. |

| − | === | + | ===Questions=== |

<quiz display=simple> | <quiz display=simple> | ||

| − | { | + | {What is the entropy $H_{\rm D}$ of the file „mixed” ? |

|type="{}"} | |type="{}"} | ||

| − | $H_{\rm D}\ = \ $ { 1 3% } $\ \rm bit/ | + | $H_{\rm D}\ = \ $ { 1 3% } $\ \rm bit/query$ |

| − | { | + | {What is the entropy $H_{\rm R}$ of the file „rainy” ? |

|type="{}"} | |type="{}"} | ||

| − | $H_{\rm R}\ = \ $ { 0.722 3% } $\ \rm bit/ | + | $H_{\rm R}\ = \ $ { 0.722 3% } $\ \rm bit/query$ |

| − | { | + | {What is the entropy $H_{\rm A}$ of the file „pleasant” ? |

|type="{}"} | |type="{}"} | ||

| − | $H_{\rm A}\ = \ $ { 0.722 3% } $\ \rm bit/ | + | $H_{\rm A}\ = \ $ { 0.722 3% } $\ \rm bit/query$ |

| − | { | + | {How large are the information contents of events $\rm B$ and $\rm G$ in relation to the file „paradise”? |

|type="{}"} | |type="{}"} | ||

| − | $I_{\rm B}\ = \ $ { 4.907 3% } $\ \rm bit/ | + | $I_{\rm B}\ = \ $ { 4.907 3% } $\ \rm bit/query$ |

| − | $I_{\rm G}\ = \ $ { 0.049 3% } $\ \rm bit/ | + | $I_{\rm G}\ = \ $ { 0.049 3% } $\ \rm bit/query$ |

| − | { | + | {What is the entropy (that is: the average information content) $H_{\rm P}$ of the file „paradise”? Interpret the result. |

|type="{}"} | |type="{}"} | ||

| − | $H_{\rm P}\ = \ $ { 0.211 3% } $\ \rm bit/ | + | $H_{\rm P}\ = \ $ { 0.211 3% } $\ \rm bit/query$ |

| − | { | + | {Which statements could be true for the file „unknown” ? |

|type="[]"} | |type="[]"} | ||

| − | + | + | + Events $\rm B$ and $\rm G$ are approximately equally likely. |

| − | - | + | - The sequence elements are statistically independent of each other. |

| − | + | + | + The entropy of this file is $H_\text{U} \approx 0.7 \; \rm bit/query$. |

| − | - | + | - The entropy of this file is $H_\text{U} = 1.5 \; \rm bit/query$. |

| Line 84: | Line 84: | ||

</quiz> | </quiz> | ||

| − | === | + | ===Solution=== |

{{ML-Kopf}} | {{ML-Kopf}} | ||

'''(1)''' For the file „mixed”, the two probabilities are equal: $p_{\rm B} = p_{\rm G} =0.5$. This gives the entropy: | '''(1)''' For the file „mixed”, the two probabilities are equal: $p_{\rm B} = p_{\rm G} =0.5$. This gives the entropy: | ||

Revision as of 20:29, 4 May 2021

A weather station queries different regions every day and receives a message $x$ back as a response in each case, namely

- $x = \rm B$: The weather is rather bad.

- $x = \rm G$: The weather is rather good.

The data were stored in files over many years for different regions, so that the entropies of the $\rm B/G$–sequences can be determined:

- $$H = p_{\rm B} \cdot {\rm log}_2\hspace{0.1cm}\frac{1}{p_{\rm B}} + p_{\rm G} \cdot {\rm log}_2\hspace{0.1cm}\frac{1}{p_{\rm G}}$$

with the binary logarithm

- $${\rm log}_2\hspace{0.1cm}p=\frac{{\rm lg}\hspace{0.1cm}p}{{\rm lg}\hspace{0.1cm}2}\hspace{0.3cm} \left ( = {\rm ld}\hspace{0.1cm}p \right ) \hspace{0.05cm}.$$

Here, „lg” denotes the logarithm to the base $10$. It should also be mentioned that the pseudo-unit $\text{bit/query}$ must be added in each case.
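The entropy formula and the change of base can be sketched in a few lines of Python. This is only an illustration: the function name `binary_entropy` and its argument `p_b` are assumptions made here, not part of the exercise.

```python
import math

def binary_entropy(p_b: float) -> float:
    """Entropy in bit/query of a memoryless binary source with P(B) = p_b.

    Hypothetical helper for illustration; p_G is taken as 1 - p_b.
    """
    if not 0.0 <= p_b <= 1.0:
        raise ValueError("p_b must lie in [0, 1]")
    total = 0.0
    for p in (p_b, 1.0 - p_b):
        if p > 0.0:  # a symbol with p = 0 contributes nothing (p * log(1/p) -> 0)
            # log2(1/p) written via the base-10 logarithm, as in the text
            total += p * math.log10(1.0 / p) / math.log10(2.0)
    return total
```

Calling `binary_entropy` with the value of $p_{\rm B}$ for a region returns the corresponding entropy in bit/query.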

The graph shows these binary sequences for $60$ days and the following regions:

- Region „mixed”: $p_{\rm B} = p_{\rm G} =0.5$,

- Region „rainy”: $p_{\rm B} = 0.8, \; p_{\rm G} =0.2$,

- Region „pleasant”: $p_{\rm B} = 0.2, \; p_{\rm G} =0.8$,

- Region „paradise”: $p_{\rm B} = 1/30, \; p_{\rm G} =29/30$.

Finally, the file „Unknown” is also given, whose statistical properties are to be estimated.

Hints:

- This task belongs to the chapter Discrete Memoryless Sources.

- For the first four files it is assumed that the events $\rm B$ and $\rm G$ are statistically independent, a rather unrealistic assumption for weather practice.

- The task was designed at a time when Greta was just starting school. We leave it to you to rename „paradise” to „hell”.

Questions

Solution

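(1) For the file „mixed”, the two probabilities are equal: $p_{\rm B} = p_{\rm G} =0.5$. This gives the entropy:

- $$H_{\rm D} = 0.5 \cdot {\rm log}_2\hspace{0.1cm}\frac{1}{0.5} + 0.5 \cdot {\rm log}_2\hspace{0.1cm}\frac{1}{0.5} \hspace{0.15cm}\underline {= 1\,{\rm bit/query}}\hspace{0.05cm}.$$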

(2) With $p_{\rm B} = 0.8$ and $p_{\rm G} =0.2$, a smaller entropy value is obtained:

- $$H_{\rm R} \hspace{-0.05cm}= \hspace{-0.05cm}0.8 \cdot {\rm log}_2\hspace{0.05cm}\frac{5}{4} \hspace{-0.05cm}+ \hspace{-0.05cm}0.2 \cdot {\rm log}_2\hspace{0.05cm}\frac{5}{1}\hspace{-0.05cm}=\hspace{-0.05cm} 0.8 \cdot{\rm log}_2\hspace{0.05cm}5\hspace{-0.05cm} - \hspace{-0.05cm}0.8 \cdot {\rm log}_2\hspace{0.05cm}4 \hspace{-0.05cm}+ \hspace{-0.05cm}0.2 \cdot {\rm log}_2 \hspace{0.05cm} 5 \hspace{-0.05cm}=\hspace{-0.05cm} {\rm log}_2\hspace{0.05cm}5\hspace{-0.05cm} -\hspace{-0.05cm} 0.8 \cdot {\rm log}_2\hspace{0.1cm}4\hspace{-0.05cm} = \hspace{-0.05cm} \frac{{\rm lg} \hspace{0.1cm}5}{{\rm lg}\hspace{0.1cm}2} \hspace{-0.05cm}-\hspace{-0.05cm} 1.6 \hspace{0.15cm} \underline {= 0.722\,{\rm bit/query}}\hspace{0.05cm}.$$

(3) In the file „pleasant”, the probabilities are exactly swapped compared to the file „rainy”. This swap does not change the entropy:

- $$H_{\rm A} = H_{\rm R} \hspace{0.15cm} \underline {= 0.722\,{\rm bit/query}}\hspace{0.05cm}.$$
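As a numerical cross-check of (2) and (3), the following sketch evaluates the entropy formula for both probability assignments and compares the result with the closed form ${\rm log}_2\hspace{0.05cm}5 - 1.6$. The helper `entropy` and the variable names are illustrative assumptions.

```python
import math

def entropy(probs):
    """Entropy in bit/query of a memoryless source with the given probabilities."""
    return sum(p * math.log2(1.0 / p) for p in probs if p > 0.0)

h_rainy = entropy([0.8, 0.2])        # p_B = 0.8, p_G = 0.2
h_pleasant = entropy([0.2, 0.8])     # probabilities swapped
closed_form = math.log2(5) - 1.6     # log2(5) - 0.8 * log2(4)

print(round(h_rainy, 3), round(h_pleasant, 3), round(closed_form, 3))
# prints: 0.722 0.722 0.722
```

The entropy depends only on the set of probabilities, not on which symbol carries which probability, which is why the two files agree.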

(4) With $p_{\rm B} = 1/30$ and $p_{\rm G} =29/30$, the following information contents are obtained:

- $$I_{\rm B} \hspace{0.1cm} = \hspace{0.1cm} {\rm log}_2\hspace{0.1cm}30 = \frac{{\rm lg}\hspace{0.1cm}30}{{\rm lg}\hspace{0.1cm}2} = \frac{1.477}{0.301} \hspace{0.15cm} \underline {= 4.907\,{\rm bit/query}}\hspace{0.05cm},$$

- $$I_{\rm G} \hspace{0.1cm} = \hspace{0.1cm} {\rm log}_2\hspace{0.1cm}\frac{30}{29} = \frac{{\rm lg}\hspace{0.1cm}1.034}{{\rm lg}\hspace{0.1cm}2} = \frac{0.0147}{0.301} \hspace{0.15cm} \underline {= 0.049\,{\rm bit/query}}\hspace{0.05cm}.$$

(5) The entropy $H_{\rm P}$ is the average information content of the two events $\rm B$ and $\rm G$:

- $$H_{\rm P} = \frac{1}{30} \cdot 4.907 + \frac{29}{30} \cdot 0.049 = 0.164 + 0.047 \hspace{0.15cm} \underline {= 0.211\,{\rm bit/query}}\hspace{0.05cm}.$$

- Although (more precisely: because) the event $\rm B$ occurs less frequently than $\rm G$, its contribution to the entropy is larger.
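The calculations in (4) and (5) can be reproduced with the short sketch below. It computes the two information contents via the base-10 logarithm and then weights them with their probabilities. The function `information_content` and the variable names are assumptions chosen for illustration.

```python
import math

def information_content(p: float) -> float:
    """Information content log2(1/p) in bit, computed as lg(1/p) / lg(2)."""
    return math.log10(1.0 / p) / math.log10(2.0)

p_b, p_g = 1 / 30, 29 / 30

i_b = information_content(p_b)   # rare event: large information content
i_g = information_content(p_g)   # frequent event: small information content

contribution_b = p_b * i_b       # share of B in the entropy
contribution_g = p_g * i_g       # share of G in the entropy
h_paradise = contribution_b + contribution_g

print(round(i_b, 3), round(i_g, 3), round(h_paradise, 3))
# prints: 4.907 0.049 0.211
```

Comparing `contribution_b` with `contribution_g` shows the interpretation given above: the rare event $\rm B$ contributes more to the entropy than the frequent event $\rm G$.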

(6) Statements 1 and 3 are correct:

- $\rm B$ and $\rm G$ are indeed equally probable in the file „unknown”: The $60$ symbols shown are divided into $30$ times $\rm B$ and $30$ times $\rm G$.

- However, there are now strong statistical dependencies within the temporal sequence. Long periods of good weather are usually followed by many bad days in a row.

- Because of this statistical dependence within the $\rm B/G$–sequence, $H_\text{U} = 0.722 \; \rm bit/query$ is smaller than $H_\text{D} = 1 \; \rm bit/query$.

- $H_\text{D}$ is at the same time the maximum for $M = 2$ ⇒ the last statement is certainly false.
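How dependencies between neighbouring symbols lower the entropy can be illustrated with a rough estimate from a sequence. The sketch below is an assumption-laden illustration: the sequence is a made-up example with long runs (not the „unknown” file from the graph), and the first-order conditional entropy used here is only one possible estimator, not necessarily the method behind the value quoted above.

```python
import math
from collections import Counter

def symbol_entropy(seq: str) -> float:
    """Entropy estimate from relative symbol frequencies (ignores memory)."""
    n = len(seq)
    return sum((c / n) * math.log2(n / c) for c in Counter(seq).values())

def conditional_entropy(seq: str) -> float:
    """Estimate of H(next symbol | previous symbol) from adjacent pairs."""
    pair_counts = Counter(zip(seq, seq[1:]))   # counts of (previous, next)
    prev_counts = Counter(seq[:-1])            # counts of the conditioning symbol
    n_pairs = len(seq) - 1
    h = 0.0
    for (prev, _nxt), c in pair_counts.items():
        p_pair = c / n_pairs                   # joint probability estimate
        p_cond = c / prev_counts[prev]         # conditional probability estimate
        h += p_pair * math.log2(1.0 / p_cond)
    return h

# Hypothetical 60-day sequence: 30 x B and 30 x G, arranged in long runs.
sequence = ("B" * 10 + "G" * 10) * 3

h0 = symbol_entropy(sequence)        # 1 bit/query, since B and G are equally frequent
h1 = conditional_entropy(sequence)   # noticeably smaller because of the long runs
print(h0, h1)
```

With equally frequent symbols the memoryless estimate reaches the maximum of $1 \; \rm bit/query$, while taking the previous day into account yields a smaller value. With only $60$ symbols, any such estimate is coarse.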