Difference between revisions of "Theory of Stochastic Signals/Moments of a Discrete Random Variable"

Revision as of 17:31, 6 December 2021


Calculation as ensemble average or time average

The probabilities and the relative frequencies provide extensive information about a discrete random variable.

Reduced information is obtained by the so-called moments $m_k$, where $k$ represents a natural number.

$\text{Two alternative ways of calculation:}$

Assuming ergodicity, as is done implicitly here, the $k$-th order moment can be calculated in two different ways:

- the ensemble averaging or "expected value formation" ⇒ averaging over all possible values $\{ z_\mu\}$ with the index $\mu = 1 , \hspace{0.1cm}\text{ ...} \hspace{0.1cm} , M$:

- $$m_k = {\rm E} \big[z^k \big] = \sum_{\mu = 1}^{M}p_\mu \cdot z_\mu^k \hspace{2cm} \rm with \hspace{0.1cm} {\rm E\big[\text{ ...} \big]\hspace{-0.1cm}:} \hspace{0.3cm} \rm expected\hspace{0.1cm}value ;$$

- the time averaging over the random sequence $\langle z_ν\rangle$ with the index $ν = 1 , \hspace{0.1cm}\text{ ...} \hspace{0.1cm} , N$:

- $$m_k=\overline{z_\nu^k}=\hspace{0.01cm}\lim_{N\to\infty}\frac{1}{N}\sum_{\nu=\rm 1}^{\it N}z_\nu^k\hspace{1.7cm}\rm with\hspace{0.1cm}horizontal\hspace{0.1cm}line\hspace{-0.1cm}:\hspace{0.1cm}time\hspace{0.1cm}average.$$

Note:

- Both calculations lead to the same result in the limit $N \to \infty$; for sufficiently large $N$ they agree approximately.

- For finite $N$, the resulting error is comparable to the error made when a probability is approximated by the corresponding relative frequency.
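The two calculation methods can be sketched in a few lines of Python. This is only an illustration using the standard library: the function names `ensemble_moment` and `time_moment`, the two-valued example variable and the fixed seed are assumptions made for this sketch and are not part of the text above.

```python
import random

def ensemble_moment(values, probs, k):
    """k-th moment as ensemble average: sum over p_mu * z_mu**k."""
    return sum(p * z**k for z, p in zip(values, probs))

def time_moment(sequence, k):
    """k-th moment as time average over a finite sequence of length N."""
    return sum(z**k for z in sequence) / len(sequence)

# Assumed example: values 1 and 3 with probabilities 0.2 and 0.8.
values, probs = [1.0, 3.0], [0.2, 0.8]

rng = random.Random(0)  # fixed seed, only for reproducibility
for n in (12, 1000, 100000):
    seq = rng.choices(values, weights=probs, k=n)  # random sequence <z_nu>
    print(n, time_moment(seq, 1), ensemble_moment(values, probs, 1))
```

For small $N$ the time average typically deviates noticeably from the ensemble average; the deviation shrinks as $N$ grows.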

Linear mean - DC component

$\text{Definition:}$ With $k = 1$ we obtain from the general equation for moments the linear mean:

- $$m_1 =\sum_{\mu=1}^{M}p_\mu\cdot z_\mu =\lim_{N\to\infty}\frac{1}{N}\sum_{\nu=1}^{N}z_\nu.$$

- The left-hand sum describes the ensemble averaging (over all possible values), while the right-hand limit gives the determination as a time average.

- In the context of signals, this quantity is also referred to as the "direct current" $\rm (DC)$ component.

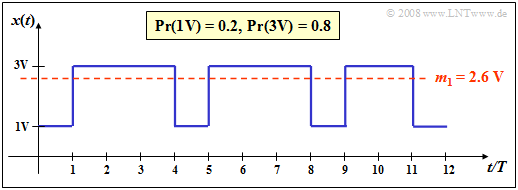

$\text{Example 1:}$ A binary signal $x(t)$ with the two possible values

- $1\hspace{0.03cm}\rm V$ $($for the symbol $\rm L)$,

- $3\hspace{0.03cm}\rm V$ $($for the symbol $\rm H)$

as well as the occurrence probabilities $p_{\rm L} = 0.2$ and $p_{\rm H} = 0.8$ has the linear mean ("DC component")

- $$m_1 = 0.2 \cdot 1\,{\rm V}+ 0.8 \cdot 3\,{\rm V}= 2.6 \,{\rm V}. $$

This is drawn as a red line in the graph.

If we determine this parameter by time averaging over the displayed $N = 12$ signal values, we obtain a slightly smaller value:

- $$m_1\hspace{0.01cm}' = 4/12 \cdot 1\,{\rm V}+ 8/12 \cdot 3\,{\rm V}= 2.33 \,{\rm V}. $$

- Here, the probabilities $p_{\rm L} = 0.2$ and $p_{\rm H} = 0.8$ were replaced by the corresponding relative frequencies $h_{\rm L} = 4/12$ and $h_{\rm H} = 8/12$, respectively.

- In this example, the relative error caused by the short sequence length $(N = 12)$ is slightly more than $10\%$.
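The numbers of this example can be checked with a short Python sketch. Only the counts (four times $\rm L$, eight times $\rm H$) are taken from the example; the order of the samples in `sequence` is hypothetical, since the sequence shown in the graph is not reproduced here.

```python
# Hypothetical order of the N = 12 samples (in volts): 4 x "L" = 1 V, 8 x "H" = 3 V.
sequence = [3, 1, 3, 3, 1, 3, 3, 3, 1, 3, 1, 3]

m1_ensemble = 0.2 * 1 + 0.8 * 3            # ensemble average from the probabilities
m1_time = sum(sequence) / len(sequence)    # time average over the 12 samples
rel_error = abs(m1_time - m1_ensemble) / m1_ensemble

print(f"time average:   {m1_time:.2f} V")  # prints 2.33 V
print(f"relative error: {rel_error:.1%}")  # prints 10.3%
```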

$\text{Note about our (admittedly somewhat unusual) nomenclature:}$

As in circuit theory, we denote the binary symbols here by $\rm L$ ("Low") and $\rm H$ ("High") to avoid confusion.

- In coding theory, it is useful to map $\{ \text{L, H}\}$ to $\{0, 1\}$ to take advantage of the possibilities of modulo algebra.

- In contrast, to describe modulation with bipolar (antipodal) signals, the mapping $\{ \text{L, H}\}$ ⇔ $ \{-1, +1\}$ is preferable.

Quadratic mean – variance – standard deviation

$\text{Definitions:}$

- Analogous to the linear mean, $k = 2$ yields the quadratic mean (also called the "mean square" or second-order moment):

- $$m_2 =\sum_{\mu=\rm 1}^{\it M}p_\mu\cdot z_\mu^2 =\lim_{N\to\infty}\frac{\rm 1}{\it N}\sum_{\nu=\rm 1}^{\it N}z_\nu^2.$$

- Together with the DC component $m_1$, the variance $σ^2$ can be determined from this as a further parameter ("Steiner's theorem"):

- $$\sigma^2=m_2-m_1^2.$$

- The square root $σ$ of the variance is the standard deviation. For signals it is also called the rms value ("root mean square"), since it equals the root mean square of the signal after the DC component has been removed:

- $$\sigma=\sqrt{m_2-m_1^2}.$$
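The relation $\sigma^2=m_2-m_1^2$ follows in one line if the variance is understood as the second-order central moment, that is, the expected squared deviation from the linear mean. This definition is assumed here; it is not spelled out in the definitions above. Using the linearity of the expected value and ${\rm E}\big[z\big] = m_1$:

$$\sigma^2 = {\rm E}\big[(z-m_1)^2\big] = {\rm E}\big[z^2\big] - 2\hspace{0.05cm} m_1 \cdot {\rm E}\big[z\big] + m_1^2 = m_2 - 2\hspace{0.05cm} m_1^2 + m_1^2 = m_2 - m_1^2.$$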

$\text{Notes on units:}$

- For message signals, $m_2$ indicates the (average) power of a random signal, referenced to a resistance of $1 \hspace{0.03cm} Ω$.

- If $z$ describes a voltage, $m_2$ accordingly has the unit ${\rm V}^2$.

- The variance $σ^2$ of a random signal corresponds physically to the "alternating power", that is, the power of the signal without its DC component, again referenced to $1 \hspace{0.03cm} Ω$.

The following (German language) learning video illustrates the defined quantities using the example of a digital signal:

Momentenberechnung bei diskreten Zufallsgrößen ⇒ "Moment Calculation for Discrete Random Variables".

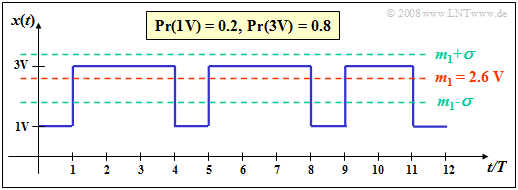

$\text{Example 2:}$ A binary signal $x(t)$ with the two possible values

- $1\hspace{0.03cm}\rm V$ $($for the symbol $\rm L)$,

- $3\hspace{0.03cm}\rm V$ $($for the symbol $\rm H)$

as well as the occurrence probabilities $p_{\rm L} = 0.2$ and $p_{\rm H} = 0.8$ has the total signal power

- $$P_{\rm total} = 0.2 \cdot (1\,{\rm V})^2+ 0.8 \cdot (3\,{\rm V})^2 = 7.4 \hspace{0.05cm}{\rm V}^2,$$

if one assumes the reference resistance $R = 1 \hspace{0.05cm} Ω$.

With the DC component $m_1 = 2.6 \hspace{0.05cm}\rm V$ $($see $\text{Example 1})$ it follows for

- the variance $σ^2 = 7.4 \hspace{0.05cm}{\rm V}^2 - \big [2.6 \hspace{0.05cm}\rm V\big ]^2 = 0.64\hspace{0.05cm} {\rm V}^2$,

- the alternating power $P_{\rm alter} = 0.64\hspace{0.05cm} {\rm W}$ ⇒ same numerical value as $σ^2$, but different unit,

- the rms value $s_{\rm eff} = σ = 0.8 \hspace{0.05cm} \rm V$.

- Aside: With a reference resistance other than $1 \hspace{0.1cm} Ω$, not all of these calculations apply. For example, with $R = 50 \hspace{0.1cm} Ω$, the total power $P_{\rm total}$, the alternating power $P_{\rm alter}$, and the rms value $s_{\rm eff}$ take the following physical values:

- $$P_{\rm total} \hspace{-0.05cm}= \hspace{-0.05cm} \frac{m_2}{R} \hspace{-0.05cm}= \hspace{-0.05cm} \frac{7.4\,{\rm V}^2}{50\,{\rm \Omega} } \hspace{-0.05cm}= \hspace{-0.05cm}0.148\,{\rm W},\hspace{0.5cm} P_{\rm alter} \hspace{-0.05cm} = \hspace{-0.05cm} \frac{\sigma^2}{R} \hspace{-0.05cm}= \hspace{-0.05cm}12.8\,{\rm mW} \hspace{0.05cm},\hspace{0.5cm} s_{\rm eff} \hspace{-0.05cm} = \hspace{-0.05cm}\sqrt{R \cdot P_{\rm alter} } \hspace{-0.05cm}= \hspace{-0.05cm} \sigma \hspace{-0.05cm}= \hspace{-0.05cm} 0.8\,{\rm V}.$$


The same variance $σ^2 = 0.64\hspace{0.05cm} {\rm V}^2$ and the same rms value $s_{\rm eff}= 0.8 \hspace{0.05cm} \rm V$ are obtained for amplitudes $0\hspace{0.05cm}\rm V$ $($for symbol $\rm L)$ and $2\hspace{0.05cm}\rm V$ $($for symbol $\rm H)$, provided that the probabilities $p_{\rm L} = 0.2$ and $p_{\rm H} = 0.8$ remain the same. Only the DC component and the total power change:

- $$m_1 = 1.6 \hspace{0.05cm}{\rm V}, \hspace{0.5cm}P_{\rm total} = {m_1}^2 +\sigma^2 = 3.2 \hspace{0.05cm}{\rm V}^2.$$
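The quantities of this example can be reproduced with the following Python sketch. The function name `signal_parameters` and the dictionary keys are illustrative choices, not notation from the text; the formulas are those given above ($m_1$, $m_2$, $σ^2 = m_2 - m_1^2$, and the powers as $m_2/R$ and $σ^2/R$).

```python
from math import sqrt

def signal_parameters(values, probs, resistance=1.0):
    """Moments and powers of a discrete-valued voltage signal (volts, ohms)."""
    m1 = sum(p * z for z, p in zip(values, probs))       # linear mean (DC component)
    m2 = sum(p * z**2 for z, p in zip(values, probs))    # quadratic mean
    variance = m2 - m1**2                                # Steiner's theorem
    return {
        "m1": m1,
        "m2": m2,
        "variance": variance,
        "sigma": sqrt(variance),            # rms value s_eff
        "p_total": m2 / resistance,         # total power in watts
        "p_alter": variance / resistance,   # alternating power in watts
    }

probs = [0.2, 0.8]
# (1 V, 3 V) at 1 ohm, the same signal at 50 ohm, and the shifted signal (0 V, 2 V).
for volts, r in (([1.0, 3.0], 1.0), ([1.0, 3.0], 50.0), ([0.0, 2.0], 1.0)):
    print(volts, r, signal_parameters(volts, probs, r))
```

The variance and the rms value are the same in all three cases; only the DC component and the powers depend on the signal offset and on $R$.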

Exercises for the chapter

Exercise 2.2: Multi-Level Signals

Exercise 2.2Z: Discrete Random Variables