Difference between revisions of "Aufgaben:Exercise 1.15: Distance Spectra of HC (7, 4, 3) and HC (8, 4, 4)"

| Line 1: | Line 1: | ||

{{quiz-Header|Buchseite=Channel_Coding/Limits_for_Block_Error_Probability}} | {{quiz-Header|Buchseite=Channel_Coding/Limits_for_Block_Error_Probability}} | ||

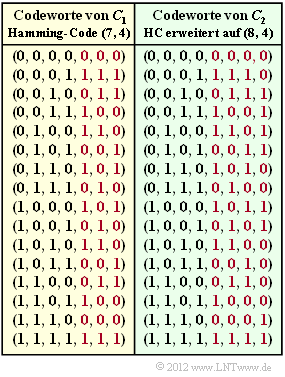

| − | [[File:P_ID2407__KC_A_1_9.png|right|frame| | + | [[File:P_ID2407__KC_A_1_9.png|right|frame|Code tables of the $(7, 4, 3)$ Hamming code and the $(8, 4, 4)$ extension.]] |

| − | + | We consider as in the [[Aufgaben:Exercise_1.09:_Extended_Hamming_Code|Task 1.9]] | |

| − | * | + | *the $(7, 4, 3)$ Hamming code and. |

| − | * | + | *the extended $(8, 4, 4)$ Hamming code. |

| − | + | The graphic shows the corresponding code tables. In the [[Aufgaben:Exercise_1.12:_Hard_Decision_vs._Soft_Decision|Exercise 1.12]] the syndrome decoding of these two codes has already been covered. In this exercise, the differences regarding the distance spectrum $\{W_{i}\}$ shall now be worked out. For the running variable $i = 0, \ \text{...} \ , n$: | |

| − | |||

| − | |||

| − | |||

| − | |||

| + | *The integer $W_{i}$ specifies the number of codewords $\underline{x}$ with the [[Channel_Coding/Objective_of_Channel_Coding#Important_definitions_for_block_coding|Hamming weight]] $\underline{w}_{\rm H}( \underline{x} ) = i$. | ||

| + | *For the linear code considered here, $W_{i}$ simultaneously describes the number of codewords with the [[Channel_Coding/Limits_for_Block_Error_Probability#Distance_spectrum_of_a_linear_code|Hamming distance]] $i$ from the all-zero word. | ||

| + | *Often one assigns to the number set $\{W_i\}$ a pseudo-function called [[Channel_Coding/Limits_for_Block_Error_Probability#Distance_spectrum_of_a_linear_code|weight enumerator function]] : | ||

:$$\left \{ \hspace{0.05cm} W_i \hspace{0.05cm} \right \} \hspace{0.3cm} \Leftrightarrow \hspace{0.3cm} W(X) = \sum_{i=0 }^{n} W_i \cdot X^{i} = W_0 + W_1 \cdot X + W_2 \cdot X^{2} + ... \hspace{0.05cm} + W_n \cdot X^{n}\hspace{0.05cm}.$$ | :$$\left \{ \hspace{0.05cm} W_i \hspace{0.05cm} \right \} \hspace{0.3cm} \Leftrightarrow \hspace{0.3cm} W(X) = \sum_{i=0 }^{n} W_i \cdot X^{i} = W_0 + W_1 \cdot X + W_2 \cdot X^{2} + ... \hspace{0.05cm} + W_n \cdot X^{n}\hspace{0.05cm}.$$ | ||

| − | Bhattacharyya | + | Bhattacharyya has used the pseudo-function $W(X)$ to specify a channel-independent (upper) bound on the block error probability: |

| − | :$${\rm Pr( | + | :$${\rm Pr(block\:error)} \le{\rm Pr(Bhattacharyya)} = W(\beta) -1 \hspace{0.05cm}.$$ |

| − | + | The so-called ''Bhattacharyya parameter'' is given as follows: | |

| − | :$$\beta = \left\{ \begin{array}{c} \lambda \\ \\ 2 \cdot \sqrt{\varepsilon \cdot (1- \varepsilon)}\\ \\ {\rm e}^{- R \hspace{0.05cm}\cdot \hspace{0.05cm}E_{\rm B}/N_0} \end{array} \right.\quad \begin{array}{*{1}c} {\rm | + | :$$\beta = \left\{ \begin{array}{c} \lambda \\ \\ 2 \cdot \sqrt{\varepsilon \cdot (1- \varepsilon)}\\ \\ {\rm e}^{- R \hspace{0.05cm}\cdot \hspace{0.05cm}E_{\rm B}/N_0} \end{array} \right.\quad \begin{array}{*{1}c} {\rm for\hspace{0.15cm} the \hspace{0.15cm}BEC\:model},\\ \\ {\rm for\hspace{0.15cm} the \hspace{0.15cm}BSC\:model}, \\ \\{\rm for\hspace{0.15cm} the \hspace{0.15cm}AWGN\:model}. \end{array}$$ |

| − | + | It should be noted that the Bhattacharyya bound is generally very pessimistic. The actual block error probability is often significantly lower. | |

| Line 32: | Line 31: | ||

| − | + | Hints: | |

| − | * | + | *This exercise refers to the chapter [[Channel_Coding/Limits_for_Block_Error_Probability|Bounds for Block Error Probability]]. |

| − | * | + | *A similar topic is covered in [[Aufgaben:Exercise_1.14:_Bhattacharyya_Bound_for_BEC|Task 1.14]] and in [[Aufgaben:Exercise_1.16:_Block_Error_Probability_Bounds_for_AWGN|Task 1.16]] . |

| − | * | + | * The channels to be considered are: |

| − | ** | + | ** the [[Channel_Coding/Channel_Models_and_Decision_Structures#Binary_Symmetric_Channel_.E2.80.93_BSC|BSC model]] (''Binary Symmetric Channel''), |

| − | ** | + | ** the [[Channel_Coding/Channel_Models_and_Decision_Structures#Binary_Erasure_Channel_.E2.80.93_BEC|BEC model]] (''Binary Erasure Channel''), |

| − | ** | + | ** the [[Channel_Coding/Channel_Models_and_Decision_Structures#AWGN_channel_at_Binary_Input|AWGN channel model]]. |

| Line 45: | Line 44: | ||

| − | === | + | ===Questions=== |

<quiz display=simple> | <quiz display=simple> | ||

{Geben Sie das Distanzspektrum des $(7, 4, 3)$–Hamming–Codes an. | {Geben Sie das Distanzspektrum des $(7, 4, 3)$–Hamming–Codes an. | ||

Revision as of 22:55, 29 July 2022

We consider as in the Task 1.9

- the $(7, 4, 3)$ Hamming code and.

- the extended $(8, 4, 4)$ Hamming code.

The graphic shows the corresponding code tables. In the Exercise 1.12 the syndrome decoding of these two codes has already been covered. In this exercise, the differences regarding the distance spectrum $\{W_{i}\}$ shall now be worked out. For the running variable $i = 0, \ \text{...} \ , n$:

- The integer $W_{i}$ specifies the number of codewords $\underline{x}$ with the Hamming weight $\underline{w}_{\rm H}( \underline{x} ) = i$.

- For the linear code considered here, $W_{i}$ simultaneously describes the number of codewords with the Hamming distance $i$ from the all-zero word.

- Often one assigns to the number set $\{W_i\}$ a pseudo-function called weight enumerator function :

- $$\left \{ \hspace{0.05cm} W_i \hspace{0.05cm} \right \} \hspace{0.3cm} \Leftrightarrow \hspace{0.3cm} W(X) = \sum_{i=0 }^{n} W_i \cdot X^{i} = W_0 + W_1 \cdot X + W_2 \cdot X^{2} + ... \hspace{0.05cm} + W_n \cdot X^{n}\hspace{0.05cm}.$$

Bhattacharyya has used the pseudo-function $W(X)$ to specify a channel-independent (upper) bound on the block error probability:

- $${\rm Pr(block\:error)} \le{\rm Pr(Bhattacharyya)} = W(\beta) -1 \hspace{0.05cm}.$$

The so-called Bhattacharyya parameter is given as follows:

- $$\beta = \left\{ \begin{array}{c} \lambda \\ \\ 2 \cdot \sqrt{\varepsilon \cdot (1- \varepsilon)}\\ \\ {\rm e}^{- R \hspace{0.05cm}\cdot \hspace{0.05cm}E_{\rm B}/N_0} \end{array} \right.\quad \begin{array}{*{1}c} {\rm for\hspace{0.15cm} the \hspace{0.15cm}BEC\:model},\\ \\ {\rm for\hspace{0.15cm} the \hspace{0.15cm}BSC\:model}, \\ \\{\rm for\hspace{0.15cm} the \hspace{0.15cm}AWGN\:model}. \end{array}$$

It should be noted that the Bhattacharyya bound is generally very pessimistic. The actual block error probability is often significantly lower.

Hints:

- This exercise refers to the chapter Bounds for Block Error Probability.

- A similar topic is covered in Task 1.14 and in Task 1.16 .

- The channels to be considered are:

- the BSC model (Binary Symmetric Channel),

- the BEC model (Binary Erasure Channel),

- the AWGN channel model.

Questions

Musterlösung

- $W_{0} \ \underline{ = \ 1}$ Codewort keine Eins beinhaltet (das Nullwort),

- $W_{3} \ \underline{ = \ 7}$ Codeworte drei Einsen beinhalten,

- $W_{4} \ \underline{ = \ 7}$ Codeworte vier Einsen beinhalten,

- $W_{7} \ \underline{ = \ 1}$ Codewort nur aus Einsen besteht.

$W_{i}$ gibt gleichzeitig die Anzahl der Codeworte an, die sich vom Nullwort in $i \ \rm Bit$ unterscheiden.

(2) Die Bhattacharyya–Schranke lautet:

- $${\rm Pr(Blockfehler)} \le{\rm Pr(Bhattacharyya)} = W(\beta) -1 \hspace{0.05cm}.$$

- Die Gewichtsfunktion ist durch die Teilaufgabe (1) festgelegt:

- $$W(X) = 1+ 7 \cdot X^{3} + 7 \cdot X^{4} + X^{7}\hspace{0.3cm} \Rightarrow \hspace{0.3cm} {\rm Pr(Bhattacharyya)} = 7 \cdot \beta^{3} + 7 \cdot \beta^{4} + \beta^{7} \hspace{0.05cm}.$$

- Für den Bhattacharyya–Parameter des BSC–Modells gilt:

- $$\beta = 2 \cdot \sqrt{\varepsilon \cdot (1- \varepsilon)} = 2 \cdot \sqrt{0.01 \cdot 0.99} = 0.199\hspace{0.3cm} \Rightarrow \hspace{0.3cm} {\rm Pr(Bhattacharyya)} = 7 \cdot 0.199^{3} + 7 \cdot 0.199^{4} + 0.199^{7} \hspace{0.15cm} \underline{ \approx 6.6\%} \hspace{0.05cm}.$$

- Ein Vergleich mit der tatsächlichen Blockfehlerwahrscheinlichkeit, wie in Aufgabe 1.12 berechnet,

- $${\rm Pr(Blockfehler)} \approx 21 \cdot \varepsilon^2 = 2.1 \cdot 10^{-3} \hspace{0.05cm},$$

- zeigt, dass Bhattacharyya nur eine grobe Schranke bereitstellt. Im vorliegenden Fall liegt diese Schranke um mehr als den Faktor $30$ über dem tatsächlichen Wert.

(3) Aus der Codetabelle des $(8, 4, 4)$–Codes erhält man folgende Ergebnisse:

- $$W(X) = 1+ 14 \cdot X^{4} + X^{8}\hspace{0.3cm} \Rightarrow \hspace{0.3cm} {\rm Pr(Bhattacharyya)} = 14 \cdot \beta^{4} + \beta^{8} = 14 \cdot 0.199^{4} + 0.199^{8} \hspace{0.15cm} \underline{ \approx 2.2\%} \hspace{0.05cm}.$$

(4) Die Gleichung für den Bhattacharyya–Parameter lautet:

- $$\beta = \left\{ \begin{array}{c} \lambda \\ \\ 2 \cdot \sqrt{ \varepsilon \cdot (1- \varepsilon)}\\ \\ {\rm e}^{- R \cdot E_{\rm B}/N_0} \end{array} \right.\quad \begin{array}{*{1}c} {\rm f\ddot{u}r\hspace{0.15cm} das \hspace{0.15cm}BEC-Modell},\\ \\ {\rm f\ddot{u}r\hspace{0.15cm} das \hspace{0.15cm}BSC-Modell}, \\ \\{\rm f\ddot{u}r\hspace{0.15cm} das \hspace{0.15cm}AWGN-Modell}. \end{array}$$

Mit dem BEC–Modell ergibt sich genau die gleiche Schranke, wenn die Auslöschungswahrscheinlichkeit $\lambda = \beta \ \underline{= 0.199}$ beträgt.

(5) Entsprechend obiger Gleichung muss gelten:

- $$\beta = {\rm e}^{- R \hspace{0.05cm}\cdot \hspace{0.05cm} E_{\rm B}/N_0} = 0.199 \hspace{0.3cm} \Rightarrow \hspace{0.3cm} R \cdot E_{\rm B}/N_0 = 10^{0.199} = 1.58 \hspace{0.05cm}.$$

- Die Coderate des erweiterten $(8, 4, 4)$–Hamming–Codes beträgt $R = 0.5$:

- $$E_{\rm B}/N_0 = 3.16 \hspace{0.3cm} \Rightarrow \hspace{0.3cm} 10 \cdot {\rm lg} \hspace{0.1cm} E_{\rm B}/N_0 \hspace{0.15cm} \underline{\approx 5\,{\rm dB}} \hspace{0.05cm}.$$

(6) Mit der Coderate $R = 4/7$ des $(7, 4, 3)$–Hamming–Codes erhält man:

- $$E_{\rm B}/N_0 = 7/4 \cdot 1.58 = 2.765 \hspace{0.3cm} \Rightarrow \hspace{0.3cm} 10 \cdot {\rm lg} \hspace{0.1cm} E_{\rm B}/N_0 \hspace{0.15cm} \underline{\approx 4.417\,{\rm dB}} \hspace{0.05cm}.$$