Contents

- 1 Overview of the fifth main chapter

- 2 Application of analog channel models

- 3 Definition of digital channel models

- 4 Example application of digital channel models

- 5 Error sequence and average error probability

- 6 Error correlation function

- 7 Relationship between error sequence and error distance

- 8 Error distance distribution

- 9 Exercises for the chapter

# OVERVIEW OF THE FIFTH MAIN CHAPTER #

At the end of this book, digital channel models are discussed

- which do not describe the transmission behavior of a digital transmission system in great detail according to the individual system components,

- but rather globally on the basis of typical error structures.

Such channel models are mainly used for »cascaded transmission systems« for the inner block, if the performance of the outer system components $($e.g. encoder and decoder$)$ is to be determined by simulation.

The following are dealt with in detail:

- The descriptive quantities »error correlation function« and »error distance distribution«,

- the »BSC model« ("Binary Symmetric Channel") for the description of statistically independent errors,

- the »burst error channel models« according to Gilbert-Elliott and McCullough,

- the »Wilhelm channel model« for the formulaic approximation of measured error curves,

- some notes on the »generation of error sequences«, for example with respect to »error distance simulation«,

- the effects of different error structures on »BMP files« $($for images$)$ and »WAV files« $($for audio$)$.

Note: All BMP images and WAV audio files of this chapter were generated with

- the (German language) Windows program "Digital Channel Models & Multimedia"

- from the (former) practical course "Simulation of Digital Transmission Systems" at the Chair of Communications Engineering of the TU Munich.

Application of analog channel models

For investigations of message transmission systems, suitable channel models are of great importance, because they are the prerequisite for

- »system simulation and optimization«, as well as

- the creation of consistent and reproducible boundary conditions.

For digital signal transmission, there are both analog and digital channel models:

- Although an analog channel model does not have to reproduce the transmission channel in all physical details, it should describe its transmission behavior, including the dominant noise variables, with sufficient functional accuracy.

- In most cases, a compromise must be found between "mathematical manageability" and the "relationship to reality".

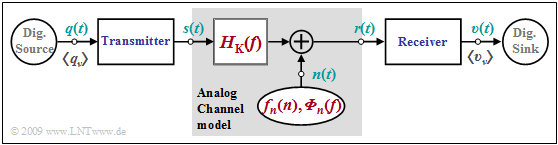

$\text{Example 1:}$ The graphic shows an "analog channel model" within a "digital transmission system". This contains

- the "channel frequency response" ⇒ $H_{\rm K}(f)$ to describe the linear distortions, and

- an additive noise signal $n(t)$, characterized by

- the "probability density function" $\rm (PDF)$ ⇒ $f_n(n)$, and

- the "power-spectral density" $\rm (PSD)$ ⇒ ${\it \Phi}_n(f)$.

A special case of this model is the so-called "AWGN channel" ("Additive White Gaussian Noise") with the system properties

- $$H_{\rm K}(f) = 1\hspace{0.05cm},$$

- $${f}_{n}(n) = \frac{1}{\sqrt{2 \pi} \cdot \sigma} \cdot {\rm e}^{-n^2\hspace{-0.05cm}/(2 \sigma^2)}\hspace{0.05cm},$$

- $${\it \Phi}_{n}(f) = {\rm const.}\hspace{0.05cm}.$$

This simple model is suitable, for example, for describing a radio channel with time-invariant behavior, where the model is abstracted such that

- the actual band-pass channel is described in the "equivalent low-pass range", and

- the attenuation, which depends on the frequency band and the transmission path length, is offset against the variance $\sigma^2$ of the noise signal $n(t)$.
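The AWGN special case can be illustrated with a few lines of simulation. The following is only a minimal sketch under stated assumptions: the function names, the bipolar amplitudes $\pm 1$ for the symbols $\rm L$ and $\rm H$, the threshold decision at zero and the value $\sigma = 0.5$ are chosen for this illustration and are not part of the model description above.

```python
import random

def awgn_channel(samples, sigma, rng):
    """Ideal channel H_K(f) = 1 plus Gaussian noise with standard deviation sigma."""
    return [s + rng.gauss(0.0, sigma) for s in samples]

def simulate(num_symbols=100_000, sigma=0.5, seed=1):
    rng = random.Random(seed)
    # Assumed mapping for this sketch: L -> -1, H -> +1
    source = [rng.choice((-1.0, 1.0)) for _ in range(num_symbols)]
    received = awgn_channel(source, sigma, rng)
    # Assumed receiver: threshold decision at zero
    decided = [1.0 if r > 0.0 else -1.0 for r in received]
    errors = sum(1 for s, d in zip(source, decided) if s != d)
    # Measured noise power should be close to the variance sigma^2
    noise_power = sum((r - s) ** 2 for s, r in zip(source, received)) / num_symbols
    return errors / num_symbols, noise_power

error_rate, noise_power = simulate()
print(f"error rate: {error_rate:.4f}, measured noise power: {noise_power:.4f}")
```

With these assumed values the measured noise power should lie close to $\sigma^2 = 0.25$, and the error rate close to ${\rm Q}(1/\sigma) = {\rm Q}(2) \approx 2.3\%$.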

To take time-variant characteristics into account, one must use other models, which are described in the book "Mobile communications".

For wired transmission systems, the specific frequency response of the transmission medium, as specified in the book "Linear Time-Invariant Systems", must be taken into account in particular, but also the fact that white noise can no longer be assumed due to "extraneous noise" (crosstalk, electromagnetic fields, etc.).

In the case of optical systems, the multiplicatively acting, i.e. signal-dependent "shot noise" must also be suitably incorporated into the analog channel model.

Definition of digital channel models

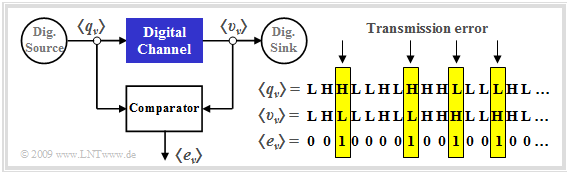

An analog channel model is characterized by analog input and output variables. In contrast, in a "digital channel model" $($sometimes referred to as "discrete"$)$, both the input and the output are discrete in time and value.

In the following, let these be

- the "source symbol sequence" $\langle q_\nu \rangle$ with $ q_\nu \in \{\rm L, \ H\}$ and

- the sink symbol sequence $ \langle v_\nu \rangle$ with $ v_\nu \in \{\rm L, \ H\}$.

The indexing variable $\nu$ can take values between $1$ and $N$.

As a comparison with the block diagram in "$\text{Example 1}$" shows:

- The "digital channel" is a simplifying model of the analog transmission channel including the technical transmission and reception units.

- Simplifying because this model only refers to the occurring transmission errors, represented by the error sequence $ \langle e_\nu \rangle$ with

- \[e_{\nu} = \left\{ \begin{array}{c} 1 \\ 0 \end{array} \right.\quad \begin{array}{*{1}c} {\rm if}\hspace{0.15cm}v_\nu \ne q_\nu \hspace{0.05cm}, \\ {\rm if}\hspace{0.15cm} v_\nu = q_\nu \hspace{0.05cm}.\\ \end{array}\]

- While $\rm L$ and $\rm H$ denote the possible symbols, which here stand for "Low" and "High", $ e_\nu \in \{\rm 0, \ 1\}$ is a real number value.

Note: Often the symbols are also defined as $ q_\nu \in \{\rm 0, \ 1\}$ and $ v_\nu \in \{\rm 0, \ 1\}$. To avoid confusion, we have used the somewhat unusual nomenclature here.
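The definition of the error sequence translates directly into a symbol-by-symbol comparison. The following sketch is an illustration only; the function name `error_sequence` and the two short example sequences are hypothetical.

```python
def error_sequence(source, sink):
    """Return e_nu = 1 where the sink symbol differs from the source symbol, else 0."""
    if len(source) != len(sink):
        raise ValueError("source and sink sequences must have the same length")
    return [1 if v != q else 0 for q, v in zip(source, sink)]

# Hypothetical example sequences over the symbol set {L, H}
q = list("LHHLHLLH")
v = list("LHLLHLHH")
e = error_sequence(q, v)
print(e)  # [0, 0, 1, 0, 0, 0, 1, 0]
```

The symbols themselves no longer appear in the result: only the positions of the transmission errors are retained.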

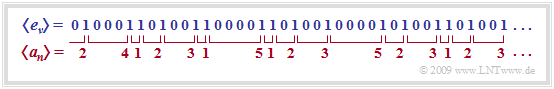

- The "error sequence" $ \langle e_\nu \rangle$ given in the graph

- is obtained by comparing the two binary sequences $ \langle q_\nu \rangle$ and $ \langle v_\nu \rangle$,

- contains only information about the sequence of transmission errors and thus less information than an analog channel model,

- is conveniently approximated by a random process with only a few parameters.


$\text{Conclusion:}$ The error sequence $ \langle e_\nu \rangle$ allows statements about the error statistics, for example whether the errors are so-called

- "statistically independent errors", or

- "burst errors".

The following example is intended to illustrate these two error types.

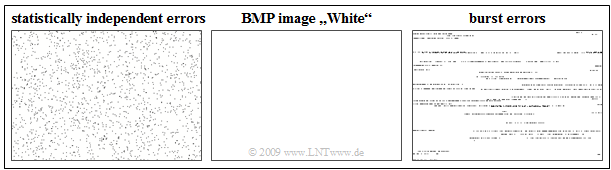

$\text{Example 2:}$ In the graph, we see in the center the BMP image "White" with $300 × 200$ pixels.

- The left image shows the falsification with statistically independent errors ⇒ "BSC model".

- The right image illustrates a burst error channel ⇒ "Gilbert-Elliott model".

Notes:

- A BMP graphic is always stored line by line, which is visible in the error bursts in the right image.

- Mean error probability in both cases: $2.5\%$; on average every $40$th pixel is falsified $($here: white ⇒ black$)$.
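The difference between the two error types can be reproduced with a small simulation. The following is a sketch under assumptions, not the program used for the images: the burst channel is a generic two-state Markov model in the spirit of the Gilbert-Elliott model, and all of its parameter values are hypothetical, chosen only so that the mean error probability is about $2.5\%$ in both cases.

```python
import random

def bsc_errors(n, p, rng):
    """Statistically independent errors: each symbol is falsified with probability p."""
    return [1 if rng.random() < p else 0 for _ in range(n)]

def two_state_burst_errors(n, p_good, p_bad, pr_g_to_b, pr_b_to_g, rng):
    """Two-state Markov model: the error probability depends on the current state."""
    errors = []
    bad = False  # assumption: start in the good state
    for _ in range(n):
        p = p_bad if bad else p_good
        errors.append(1 if rng.random() < p else 0)
        # State transition for the next symbol
        if bad:
            bad = rng.random() >= pr_b_to_g
        else:
            bad = rng.random() < pr_g_to_b
    return errors

def follow_up_rate(e):
    """Relative frequency with which an error is directly followed by another error."""
    pairs = sum(1 for i in range(len(e) - 1) if e[i] == 1)
    hits = sum(1 for i in range(len(e) - 1) if e[i] == 1 and e[i + 1] == 1)
    return hits / pairs if pairs else 0.0

rng = random.Random(42)
n = 200_000
bsc = bsc_errors(n, 0.025, rng)
# Hypothetical parameters: bad state occupied 5% of the time, error probability 0.5 there
burst = two_state_burst_errors(n, 0.0, 0.5, 0.005, 0.095, rng)

for name, e in (("independent", bsc), ("burst", burst)):
    print(f"{name}: mean = {sum(e) / n:.4f}, follow-up rate = {follow_up_rate(e):.3f}")
```

Both sequences have nearly the same mean error probability, but in the burst case an error is far more likely to be followed directly by another error. This is the clustering visible in the right image.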

Example application of digital channel models

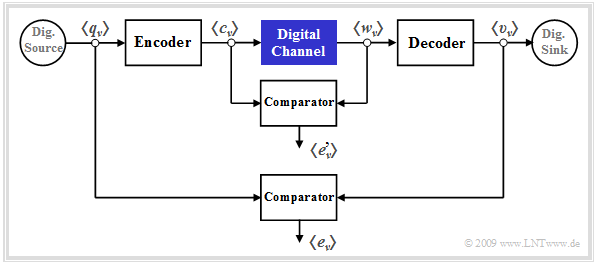

Digital channel models are preferably used for cascaded transmission, as shown in the graph. You can see from this diagram:

- The inner transmission system – consisting of modulator, analog channel, noise, demodulator, receiver filter, decision, clock recovery – is summarized in the block "Digital channel" marked in blue.

- This inner block is characterized exclusively by its error sequence $ \langle e\hspace{0.05cm}'_\nu \rangle$, which refers to its input sequence $ \langle c_\nu \rangle$ and output sequence $ \langle w_\nu \rangle$. It is obvious that this channel model provides less information than a detailed analog model considering all components.

- In contrast, the "outer error sequence" $ \langle e_\nu \rangle$ refers to the source symbol sequence $ \langle q_\nu \rangle$ and the sink symbol sequence $ \langle v_\nu \rangle$ and thus to the overall system including the specific encoding and the decoder on the receiver side.

- The comparison of the two error sequences with and without consideration of encoder/decoder allows conclusions to be drawn about the efficiency of the underlying coding and decoding. These two components are appropriate if and only if the outer comparator indicates fewer errors on average than the inner comparator.
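The comparison of the inner and the outer error sequence can be sketched as follows. All specifics are assumptions made for this illustration: the symbols are represented as $0$ and $1$, the inner digital channel is replaced by a BSC with error probability $0.05$, and the outer code is a hypothetical threefold repetition code with majority decision.

```python
import random

def bsc(bits, p, rng):
    """Inner digital channel modeled as BSC: flip each bit with probability p."""
    return [b ^ (1 if rng.random() < p else 0) for b in bits]

def encode(bits):
    """Hypothetical outer encoder: repeat every source bit three times."""
    return [b for b in bits for _ in range(3)]

def decode(bits):
    """Majority decision over each group of three received bits."""
    return [1 if sum(bits[i:i + 3]) >= 2 else 0 for i in range(0, len(bits), 3)]

def error_rate(a, b):
    """Mean of the error sequence obtained by comparing two sequences."""
    return sum(x != y for x, y in zip(a, b)) / len(a)

rng = random.Random(7)
q = [rng.randint(0, 1) for _ in range(50_000)]  # source symbol sequence
c = encode(q)                                   # input of the digital channel
w = bsc(c, 0.05, rng)                           # output of the digital channel
v = decode(w)                                   # sink symbol sequence

inner_rate = error_rate(c, w)  # inner comparator: <c_nu> versus <w_nu>
outer_rate = error_rate(q, v)  # outer comparator: <q_nu> versus <v_nu>
print(f"inner: {inner_rate:.4f}, outer: {outer_rate:.4f}")
```

In this setting the outer comparator indicates clearly fewer errors than the inner one, so the assumed encoder/decoder pair would be judged appropriate in the sense described above.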

Error sequence and average error probability

$\text{Definition:}$ The transmission behavior of a binary system is completely described by the error sequence $ \langle e_\nu \rangle$:

- \[e_{\nu} = \left\{ \begin{array}{c} 1 \\ 0 \end{array} \right.\quad \begin{array}{*{1}c} {\rm if}\hspace{0.15cm}v_\nu \ne q_\nu \hspace{0.05cm}, \\ {\rm if}\hspace{0.15cm} v_\nu = q_\nu \hspace{0.05cm}.\\ \end{array}\]

- From this, the (mean) bit error probability can be calculated as follows:

- \[p_{\rm M} = {\rm E}\big[e \big] = \lim_{N \rightarrow \infty} \frac{1}{N} \sum_{\nu = 1}^{N}e_{\nu}\hspace{0.05cm}.\]

- It is assumed here that the random process generating the errors is "stationary" and "ergodic", so that the error sequence $ \langle e_\nu \rangle$ can also be formally described completely by the random variable $e \in \{0, \ 1\}$. Thus, the transition from time averaging to ensemble averaging is allowed.

Note:

- In all other $\rm LNTwww $ books, the "mean bit error probability" is denoted by $p_{\rm B}$.

- To avoid confusion in connection with the "Gilbert–Elliott model", this renaming here is unavoidable.

- We will no longer refer to the bit error probability in the following, but only to the "mean error probability" $p_{\rm M}$.

Error correlation function

$\text{Definition:}$ Another important descriptive quantity of the digital channel models is the error correlation function – abbreviated $\rm ECF$:

- \[\varphi_{e}(k) = {\rm E}\big [e_{\nu} \cdot e_{\nu + k}\big ] = \overline{e_{\nu} \cdot e_{\nu + k} }\hspace{0.05cm}.\]

The error correlation function has the following properties:

- $\varphi_{e}(k) $ is the (discrete-time) "auto-correlation function" of the random variable $e$, which is also discrete-time. The overline on the right-hand side of the equation denotes time averaging.

- The error correlation value $\varphi_{e}(k) $ provides statistical information about two sequence elements that are $k$ apart, e.g. about $e_{\nu}$ and $e_{\nu+ k}$. The intervening elements $e_{\nu+ 1}$, ... , $e_{\nu+ k-1}$ do not affect the $\varphi_{e}(k)$ value.

- For stationary sequences, since $e \in \{0, \ 1\}$, the following always holds regardless of the error statistics:

- \[\varphi_{e}(k = 0) = {\rm E}\big[e_{\nu} \cdot e_{\nu}\big] = {\rm E}\big[e^2\big]= {\rm E}\big[e\big]= {\rm Pr}(e = 1)= p_{\rm M}\hspace{0.05cm},\]

- \[\varphi_{e}(k \rightarrow \infty) = {\rm E}\big[e_{\nu}\big] \cdot {\rm E}\big[e_{\nu + k}\big] = p_{\rm M}^2\hspace{0.05cm}.\]

- The error correlation function is an "at least weakly decreasing function". The slower the decay of the $\rm ECF$ values, the longer the memory of the channel and the farther the statistical dependencies within the error sequence extend.
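The two limiting values can be checked numerically by estimating the $\rm ECF$ as a time average over a finite error sequence. The following is a minimal sketch; the function name `error_correlation` and the test sequence with statistically independent errors $(p = 0.1)$ are assumptions made for this illustration.

```python
import random

def error_correlation(e, k):
    """Time-average estimate of phi_e(k) = E[e_nu * e_(nu+k)] from a finite sequence."""
    n = len(e) - k
    if n <= 0:
        raise ValueError("k must be smaller than the sequence length")
    return sum(e[i] * e[i + k] for i in range(n)) / n

rng = random.Random(3)
p = 0.1  # assumed error probability of the test sequence
e = [1 if rng.random() < p else 0 for _ in range(200_000)]

p_m = sum(e) / len(e)
phi = [error_correlation(e, k) for k in range(6)]
print(f"p_M = {p_m:.4f}, p_M^2 = {p_m ** 2:.4f}")
print("phi_e(k), k = 0..5:", [round(x, 4) for x in phi])
```

For independent errors the estimate drops from $p_{\rm M}$ at $k = 0$ to about $p_{\rm M}^2$ already at $k = 1$. For a channel with memory the decay would be slower.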

$\text{Example 3:}$ In a binary transmission, $100$ of the total $N = 10^5$ transmitted binary symbols are falsified, so that the error sequence $ \langle e_\nu \rangle$

- consists of $100$ "ones"

- and $99900$ "zeros".

Thus:

- The mean error probability is $p_{\rm M} =10^{-3}$.

- The error correlation function $\varphi_{e}(k)$ starts at $p_{\rm M} =10^{-3}$ $($for $k = 0)$ and tends towards $p_{\rm M}^2 =10^{-6}$ for very large $k$ values $(k \to \infty)$.

- So far, no statement can be made about the actual course of $\varphi_{e}(k)$ with the information given here.
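The last point can be made concrete by comparing two error sequences that both contain $100$ ones among $N = 10^5$ symbols. Both arrangements are hypothetical and serve only as an illustration: in the first the errors are evenly spread, in the second they form a single burst.

```python
N = 100_000

def error_correlation(e, k):
    """Time-average estimate of phi_e(k) from a finite error sequence."""
    n = len(e) - k
    return sum(e[i] * e[i + k] for i in range(n)) / n

# Arrangement A (assumption): exactly one error in every block of 1000 symbols
spread = [1 if i % 1000 == 0 else 0 for i in range(N)]
# Arrangement B (assumption): all 100 errors directly after each other
burst = [1 if i < 100 else 0 for i in range(N)]

for name, e in (("spread", spread), ("burst", burst)):
    p_m = sum(e) / N
    print(f"{name}: p_M = {p_m:.0e}, phi_e(0) = {error_correlation(e, 0):.0e}, "
          f"phi_e(1) = {error_correlation(e, 1):.2e}")
```

Both sequences yield the same $p_{\rm M}$ and the same $\varphi_{e}(0)$, but their values for $k = 1$ differ completely. The number of errors alone therefore does not determine the course of the $\rm ECF$.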

Relationship between error sequence and error distance

$\text{Definition:}$ The error distance $a$ is the number of correctly transmitted symbols between two channel errors plus $1$. The sketch illustrates this definition.

Any information about the transmission behavior of the digital channel

- contained in the error sequence $ \langle e_\nu \rangle$

- is also contained in the sequence $ \langle a_n \rangle$ of error distances.

Note: Since the sequences $ \langle e_\nu \rangle$ and $ \langle a_n \rangle$ are not synchronous, we use different indices $(\nu$ resp. $n)$.

In particular, we can see from the graph above:

- Since the first symbol was transmitted correctly $(e_1 = 0)$ and the second incorrectly $(e_2 = 1)$, the error distance is $a_1 = 2$.

- $a_2 = 4$ indicates that three symbols were transmitted correctly between the first two errors $(e_2 = 1, \ e_6 = 1)$.

- If two errors follow each other directly, the error distance is equal to $1$: $e_6 = 1, \ e_7 = 1$ ⇒ $a_3=1$.

- The event "$a = k$" means simultaneously "$k-1$ error–free symbols between two errors" ⇒ If an error occurred at time $\nu$, the next error follows at time $\nu + k$.

- The set of values of the random variable $a$ is the set of natural numbers in contrast to the binary random variable $e$:

- \[a \in \{ 1, 2, 3, ... \}\hspace{0.05cm}, \hspace{0.5cm}e \in \{ 0, 1 \}\hspace{0.05cm}.\]

- The mean error probability can be determined from both random variables:

- $${\rm E}\big[e \big] = {\rm Pr}(e = 1) =p_{\rm M}\hspace{0.05cm},$$

- $$ {\rm E}\big[a \big] = \sum_{k = 1}^{\infty} k \cdot {\rm Pr}(a = k) = {1}/{p_{\rm M}}\hspace{0.05cm}.$$
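The relation ${\rm E}\big[a \big] = 1/p_{\rm M}$ can be made plausible by a short counting argument. This is a heuristic sketch for a stationary and ergodic error process, not a rigorous proof: a long sequence of $N$ symbols contains about $N \cdot p_{\rm M}$ errors, and the associated error distances add up to about $N$. Hence:

$${\rm E}\big[a \big] \approx \frac{\text{sum of all error distances} }{\text{number of errors} } \approx \frac{N}{N \cdot p_{\rm M} } = \frac{1}{p_{\rm M} }\hspace{0.05cm}.$$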

$\text{Example 4:}$

- In the sequence sketched above, $16$ of the total $N = 40$ symbols are falsified ⇒ $p_{\rm M} = 0.4$.

- Accordingly, the expected value of the error distances gives

- \[{\rm E}\big[a \big] = 1 \cdot {4}/{16}+ 2 \cdot {5}/{16}+ 3 \cdot {4}/{16}+4 \cdot {1}/{16}+5 \cdot {2}/{16}= 2.5 = {1}/{p_{\rm M} }\hspace{0.05cm}.\]
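The conversion between error sequence and error distances, and the numbers of this example, can be checked with the following sketch. The function names are hypothetical. The first three distances $(2, 4, 1)$ are taken from the description above; the order of the remaining distances is an assumption, only their frequencies follow the expected-value calculation.

```python
def error_distances(e):
    """Error distances a_n: position of the first error, then gaps between errors."""
    distances = []
    last_error = 0  # position before the first symbol (positions are 1-based)
    for nu, value in enumerate(e, start=1):
        if value == 1:
            distances.append(nu - last_error)
            last_error = nu
    return distances

def sequence_from_distances(distances):
    """Inverse mapping: each distance a yields (a - 1) correct symbols and one error."""
    e = []
    for a in distances:
        e.extend([0] * (a - 1) + [1])
    return e

# Frequencies as in the example: 4 x "1", 5 x "2", 4 x "3", 1 x "4", 2 x "5"
a_seq = [2, 4, 1] + [1, 1, 1] + [2, 2, 2, 2] + [3, 3, 3, 3] + [5, 5]
e_seq = sequence_from_distances(a_seq)

p_m = sum(e_seq) / len(e_seq)
mean_distance = sum(a_seq) / len(a_seq)
print(len(e_seq), sum(e_seq), p_m, mean_distance)  # 40 16 0.4 2.5
```

Since `error_distances(e_seq)` returns `a_seq` again, both representations carry the same information about the transmission errors.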

Error distance distribution

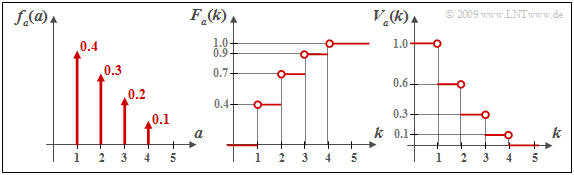

The "probability density function" $\rm (PDF)$ of the discrete random variable $a \in \{1, 2, 3, \text{...}\}$ is composed of an (infinite) sum of Dirac delta functions according to the chapter "PDF definition for discrete random variables" in the book "Stochastic Signal Theory":

- \[f_a(a) = \sum_{k = 1}^{\infty} {\rm Pr}(a = k) \cdot \delta (a-k)\hspace{0.05cm}.\]

- We refer to this particular PDF as the "error distance density function". Based on the error sequence $ \langle e_\nu \rangle$, the probability that the error distance $a$ is exactly equal to $k$ can be expressed by the following conditional probability:

- \[{\rm Pr}(a = k) = {\rm Pr}(e_{\nu + 1} = 0 \hspace{0.15cm}\cap \hspace{0.15cm} \text{...} \hspace{0.15cm}\cap \hspace{0.15cm}\hspace{0.05cm} e_{\nu + k -1} = 0 \hspace{0.15cm}\cap \hspace{0.15cm}e_{\nu + k} = 1 \hspace{0.1cm}| \hspace{0.1cm} e_{\nu } = 1)\hspace{0.05cm}.\]

- In the book "Stochastic Signal Theory" you will also find the definition of the "cumulative distribution function" $\rm (CDF)$ of the discrete random variable $a$:

- \[F_a(k) = {\rm Pr}(a \le k) \hspace{0.05cm}.\]

- This function is obtained from the PDF $f_a(a)$ by integration from $1$ to $k$. The function $F_a(k)$ can take values between $0$ and $1$ $($including these two limits$)$ and is weakly monotonically increasing.

In the context of digital channel models, the literature deviates from this usual definition.

$\text{Definition:}$ Rather, here the error distance distribution $\rm (EDD)$ gives the probability that the error distance $a$ is greater than or equal to $k$:

- \[V_a(k) = {\rm Pr}(a \ge k) = 1 - \sum_{\kappa = 1}^{k-1} {\rm Pr}(a = \kappa)\hspace{0.05cm}.\]

- In particular:

- $$V_a(k = 1) = 1 \hspace{0.05cm},\hspace{0.5cm} \lim_{k \rightarrow \infty}V_a(k ) = 0 \hspace{0.05cm}.$$

The following relationship holds between the monotonically increasing function $F_a(k)$ and the monotonically decreasing function $V_a(k)$:

- \[F_a(k ) = 1-V_a(k +1) \hspace{0.05cm}.\]
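Both functions can be estimated as relative frequencies from a measured or simulated error sequence. The sketch below is an illustration under assumptions: the function names are hypothetical, and the test sequence contains statistically independent errors with $p = 0.1$, for which ${\rm Pr}(a \ge k) = (1-p)^{k-1}$ holds.

```python
import random

def error_distances(e):
    """Error distances: position of the first error, then gaps between errors."""
    distances, last_error = [], 0
    for nu, value in enumerate(e, start=1):
        if value == 1:
            distances.append(nu - last_error)
            last_error = nu
    return distances

def cdf(distances, k):
    """F_a(k) = Pr(a <= k), estimated as a relative frequency."""
    return sum(1 for a in distances if a <= k) / len(distances)

def edd(distances, k):
    """V_a(k) = Pr(a >= k), estimated as a relative frequency."""
    return sum(1 for a in distances if a >= k) / len(distances)

rng = random.Random(5)
p = 0.1  # assumed error probability of the test sequence
e = [1 if rng.random() < p else 0 for _ in range(200_000)]
a = error_distances(e)

for k in (1, 2, 5, 10):
    print(f"k = {k:2d}: V_a = {edd(a, k):.4f}, (1-p)^(k-1) = {(1 - p) ** (k - 1):.4f}, "
          f"F_a = {cdf(a, k):.4f}")
```

The estimate starts at $V_a(1) = 1$ and decreases monotonically, and the estimated $F_a(k)$ coincides with $1 - V_a(k+1)$ for every $k$.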

$\text{Example 5:}$ The graph shows in the left sketch an arbitrary discrete error distance density function $f_a(a)$ and the resulting integrated functions:

- $F_a(k ) = {\rm Pr}(a \le k)$ ⇒ middle sketch, as well as

- $V_a(k ) = {\rm Pr}(a \ge k)$ ⇒ right sketch.

For example, for $k = 2$, we obtain:

- \[F_a( k =2 ) = {\rm Pr}(a = 1) + {\rm Pr}(a = 2) \hspace{0.05cm}, \]

- \[\Rightarrow \hspace{0.3cm} F_a( k =2 ) = 1-V_a(k = 3)= 0.7\hspace{0.05cm}, \]

- \[ V_a(k =2 ) = 1 - {\rm Pr}(a = 1) \hspace{0.05cm},\]

- \[\Rightarrow \hspace{0.3cm} V_a(k =2 ) = 1-F_a(k = 1) = 0.6\hspace{0.05cm}.\]

For $k = 4$, the following results are obtained:

- \[F_a(k = 4 ) = {\rm Pr}(a \le 4) = 1 \hspace{0.05cm}, \hspace{0.5cm} V_a(k = 4 ) = {\rm Pr}(a \ge 4)= {\rm Pr}(a = 4) = 0.1 = 1-F_a(k = 3) \hspace{0.05cm}.\]
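The numbers of this example can be reproduced with exact arithmetic. The probabilities ${\rm Pr}(a = k)$ used below are not read from the sketch but inferred from the values quoted above $($an assumption that is consistent with all of them$)$: $0.4$, $0.3$, $0.2$ and $0.1$ for $k = 1$, ... , $4$.

```python
from fractions import Fraction

# Pr(a = k), inferred from the values quoted in the example
pdf = {1: Fraction(4, 10), 2: Fraction(3, 10), 3: Fraction(2, 10), 4: Fraction(1, 10)}

def F_a(k):
    """Cumulative distribution function Pr(a <= k)."""
    return sum(p for a, p in pdf.items() if a <= k)

def V_a(k):
    """Error distance distribution Pr(a >= k)."""
    return sum(p for a, p in pdf.items() if a >= k)

for k in range(1, 5):
    print(f"k = {k}: F_a = {float(F_a(k)):.1f}, V_a = {float(V_a(k)):.1f}")

# The relationship F_a(k) = 1 - V_a(k + 1) holds for every k
print(all(F_a(k) == 1 - V_a(k + 1) for k in range(0, 6)))  # True
```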

Exercises for the chapter

Exercise 5.1: Error Distance Distribution

Exercise 5.2: Error Correlation Function